Dear Reader,

It’s been a year since I started Coin Data School. If you were with me back then, you probably remember my GMX and GLP Dune dashboards. They have been outdated because the GMX team updated a couple of their smart contracts and because Dune migrated to a new SQL variant that made the old SQL queries obsolete. If you bought my Uniswap V3 book, you know I have been entering and exiting Uniswap V3 positions with my ARB airdrop. Two weeks ago, I collateralized my precious ARB on Compound and borrowed USDC.e to buy GLP again. It has worked out well so far: ARB price has pumped and my health factor is sitting at 2.46. My GLP bag is working diligently to convert the esGMX I farmed before to GMX while earning a juicy yield. And GLP price has gone up. Now that I have skin in the game again, I decide to update the dashboards. After having reworked them multiple times, I feel like I have more than enough material to share with you aspiring Dune wizards out there. I don’t know how many articles I will eventually write in this tutorial series, but by the time I close the series, we’ll build, step by step, the queries behind this dashboard for GLP holders and that dashboard for GMX and/or esGMX stakers on Arbitrum. So if you haven’t already, head over to Dune and sign up, and open a New Query to follow along. Our goal for today is to get GLP daily median prices on Arbitrum.

The most time consuming part when building dune dashboards is to figure out which tables contain the data you want. This requires you to read the protocol’s doc and the smart contracts.

GMX’s official doc states how to get GLP price as follows:

So we need to get GLP’s AUM and total supply and do a division. If we check the GlpManager contract on Arbiscan, we find a function called getPrice() that does exactly that. And we can guess that _maximise takes on two values: true or false, which gives the buy or sell prices respectively.

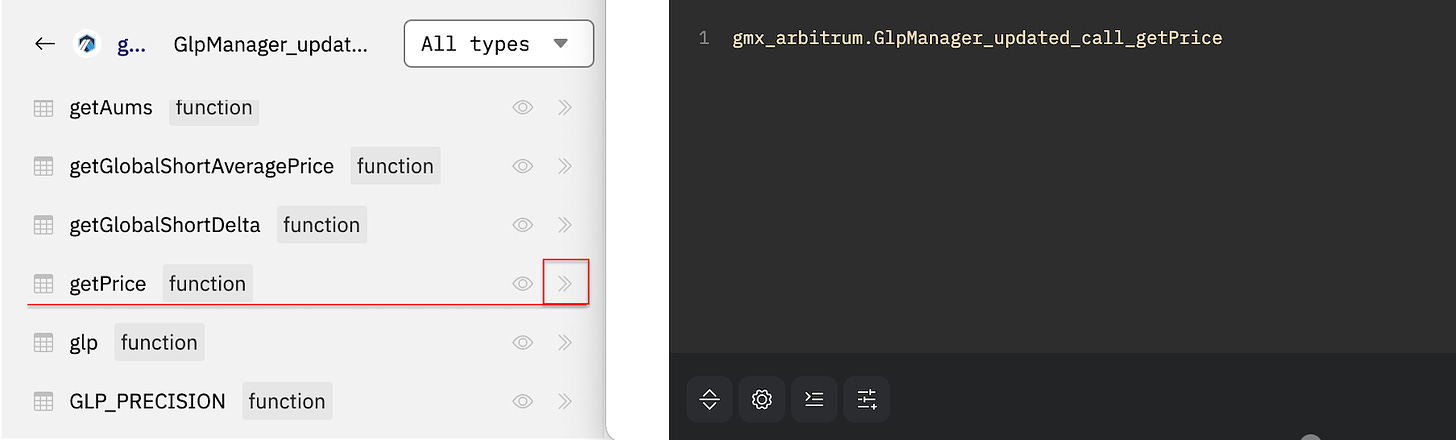

At this point, let’s head over to Dune and search for “GlpManager Arbitrum” in the left sidebar. It will return a bunch of tables. Scroll down and find the two items in the red box shown in the screen below: GlpManager is a table for the old contract that was in use between August 2021 and end of 2022; GlpManager_updated is a table for the new contract that has been in use since the early months of 2023. Let’s look at GlpManager_updated first since it contains recent data.

Click on GlpManager_updated and scroll down a bit, you will see the getPrice function. Click on the right arrow to send the table to the query editor.

Let’s query the first 10 rows to see what data are on this table.

The above screen shows the table contains the following columns:

contract_address: this is the address of the updated GlpManager contract.

call_success: whether the transaction went through (

true) or failed (false). We often only want the successful ones so we will want to apply a filter:where call_success = true.call_tx_hash: you can enter it on arbiscan to see more details of the transaction.

call_block_time: transaction time, accurate to minute. We can extract hour, day, week, month, quarter, or year and perform aggregation on numerical columns.

_maximise: we saw this parameter in the solidity function mentioned above, and

trueis buy andfalseis sell.output_0: this is the price because the solidity function

getPrice()returns the price of GLP. When a solidity function returns two things, we may see columnsoutput_0andoutput_1.

But why are the output_0 values so big? That’s because the price precision is 1e30 as shown from the solidity code. So we need to divide output_0 by 1e30 to get the price. Let’s do that and a few other things to get an informative price table. By the way, I’m posting the screenshot of my SQL code because I want you to type letter by letter in your editor instead of copy-n-pasting them as that’s a better way to learn. But if you are feeling overwhelmed, here’s the link to my SQL code. You can fork it and run each block in your account.

As we see from the above screen, price from this table only goes back to 04 January 2023, and we also want to get prior prices all the way back to 31 Aug 2021, which was the protocol’s inception date on Arbitrum. This leads us to the GlpManager table, which corresponds to the old GLP manager contract. Ideally, we’d like to simply swap gmx_arbitrum.GlpManager_updated_call_getPrice with gmx_arbitrum.GlpManager_call_getPrice in the code above and be done with it. But it doesn’t work because the old contract doesn’t have a getPrice() function. Try it for yourself. Our next thought is to get AUM and totalSupply and divide the two. The old contract does have a getAUM() function, so let’s check how far back its data go.

It turns out the data only go back to the end of March 2022. Plus, there are gaps. For example, the entire month of April 2022 is missing as shown in the screen below.

So what do we do now? Well, at this stage, you’d need to think about how else to get the price, and check other GMX and GLP data tables on dune, and read the doc. Because I bought and sold GLP in the past, I noticed from my transactions on arbiscan, that when I buy GLP, my purchase tokens are first converted to USDG, an internal stablecoin for tracking the USD value of tokens, and then the USDG amount will be spent and GLP shares will be minted. Dividing the USDG amount by the minted GLP shares gives me the purchase price. Similarly, when I sell GLP, shares are burned and USDG amount are paid out first before being converted to the token I want to withdraw. Dividing the USDG amount by the burned GLP shares gives me the sale price. So if I could get the USDG amount and the corresponding GLP shares for each mint and burn, I could calculate the price. And I can get them from these two tables, where the date goes back to 31 Aug 2021 without gaps:

gmx_arbitrum.GlpManager_evt_AddLiquidity

gmx_arbitrum.GlpManager_evt_RemoveLiquidity

Once I get the prices from 31 Aug 2021 to 04 Jan 2023, I can stack them together with the prices from 04 Jan 2023 to the present using the union operator. I can then extract the day from the timestamp and get the daily median buy and sell prices with a group by. The final query is here. Star and fork it and play to your heart’s content. Also make sure you check out the data vis underneath the query and see how I configured them: decimals, labels, dollar signs, titles, and colors.

The path to Dune wizardry is paved with trial and error and tedious detective work. It is not for the faint heart. The bulk of the work is often not coding but understanding how a DeFi protocol works. So be prepared to spend a lot of time on the documentation and smart contracts.

Referrals

This section contains affiliate links to crypto products. If you click on the link and use the product, I may receive a small commission at no cost to you. I only link to products I use myself.

Digital Ocean is a cloud computing platform where you can rent remote servers for cheap. I have my remote data science server there. You can do the same and get $200 credit.

Mint.fun is a NFT aggregator/minting platform where you get points for minting and qualify for potential airdrop. Your mints will also get you Zora points for some NFTs.

Debank will likely do an airdrop in the near future. Better to start using it now.

Join the waitlist for Synthetix's newest frontend, Infinex. Team is considering a token and people on the waitlist may be rewarded.

People are speculating Reveel will airdrop like Arkham did because they are doing early user sign-up and waitlist just like Arkham did in its early days. Binance Labs invested in it and I heard they raised (or are in the process of raising) $2m. You can now use my referral link to sign up and hopefully be pleasantly surprised later. There’s nothing to lose.

Qualify for potential airdrop when trading on Hyperliquid.

Get 5% discount when trading on GMX.

PS: I put a lot of thoughts and time into this article, so if you got something out of it, can I ask you to buy me a coffee to help me stay awake?